The Heads Hypothesis: A Unifying Statistical Approach Towards Understanding Multi-Headed Attention in BERT

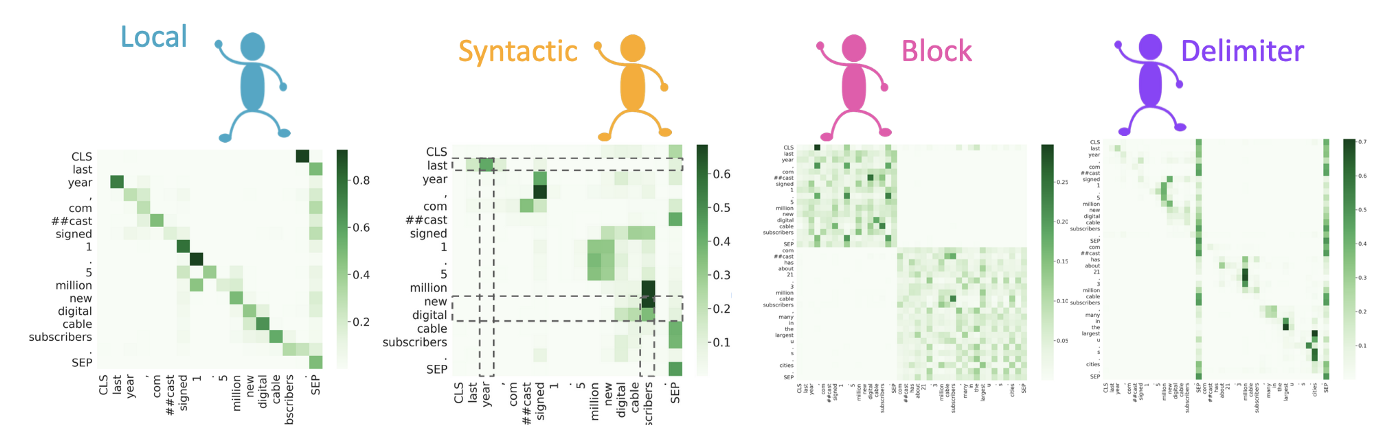

BERT has been a top contender among NLP models. With its success, a parallel stream of research, named BERTology, has emerged that tries to understand why BERT works so well. With a similar objective, this blog explains a novel technique for analysing the behaviour of BERT’s attention heads. Read on for interesting insights into the inner workings of BERT!
