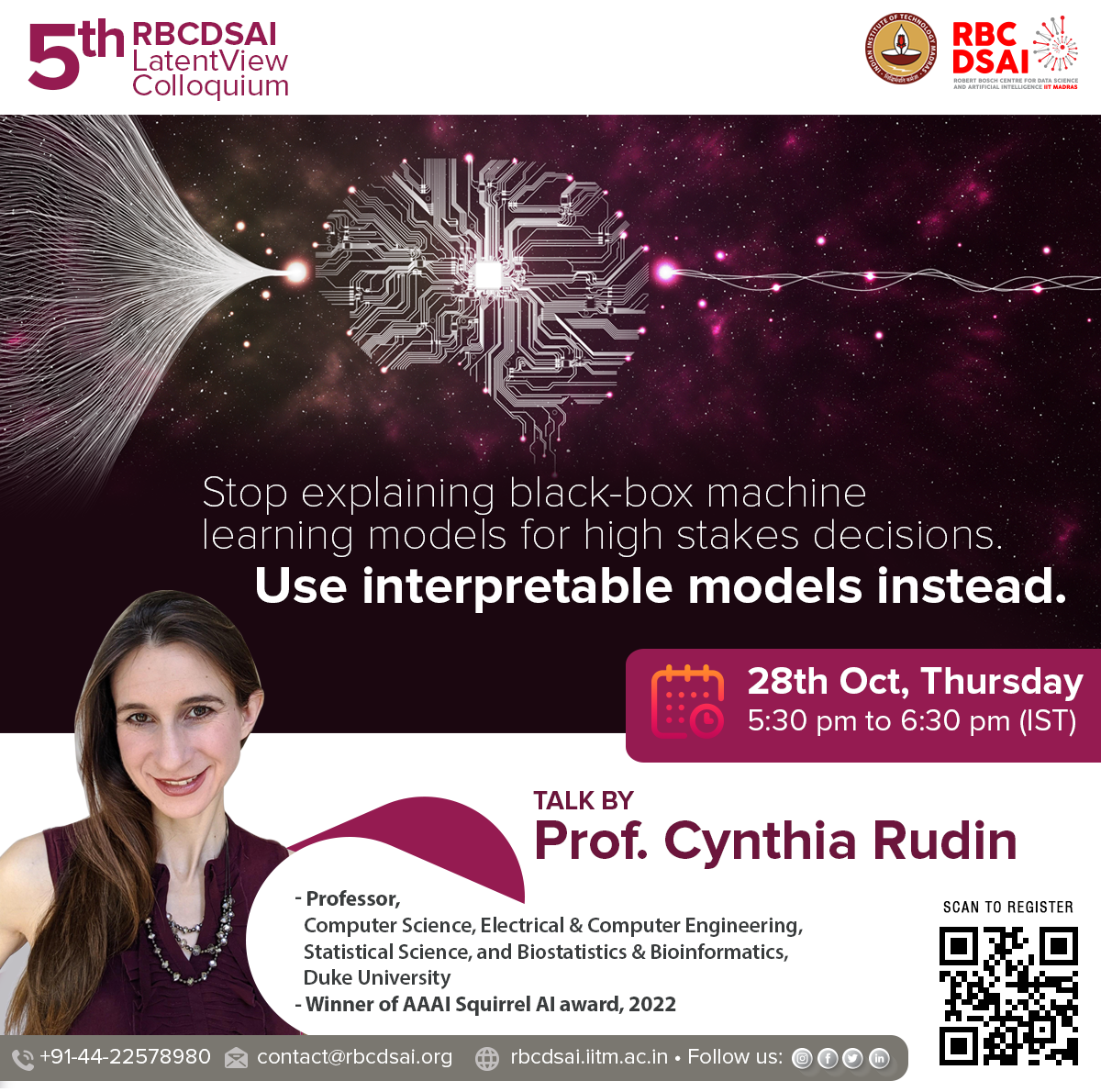

Accurate and Interpretable AI models: Towards Deployable AI

Mar 28, 2022

A self-driving car bumps into a lamppost. A doctor prescribes the wrong treatment to a patient based on an AI-based diagnostic tool. A missile is misfired by an AI-based defense system. A banking chatbot makes an unfair decision, and the bank loses customers.

The examples above show why AI-based solutions must be deployed carefully in the real world. While wrong predictions made by recommendation systems in domains like retail might be inexpensive, wrong predictions in domains like healthcare, self-driving vehicles, banking, or defense can cause hefty monetary losses or even loss of life.

3 min read

Interpretable AI

--Prof. Cynthia Rudin--

For a long time, researchers and the public were thrilled by the wonders of machine learning. Over time, however, the community realized that machine learning models are not a magic wand: they are only as good as the data provided to them during training and development. As the world started making decisions based on AI, conflicts soon arose between human and machine intelligence, and the need for explainability of black-box models became apparent.

5 min read